
And, will we know it when we see it?

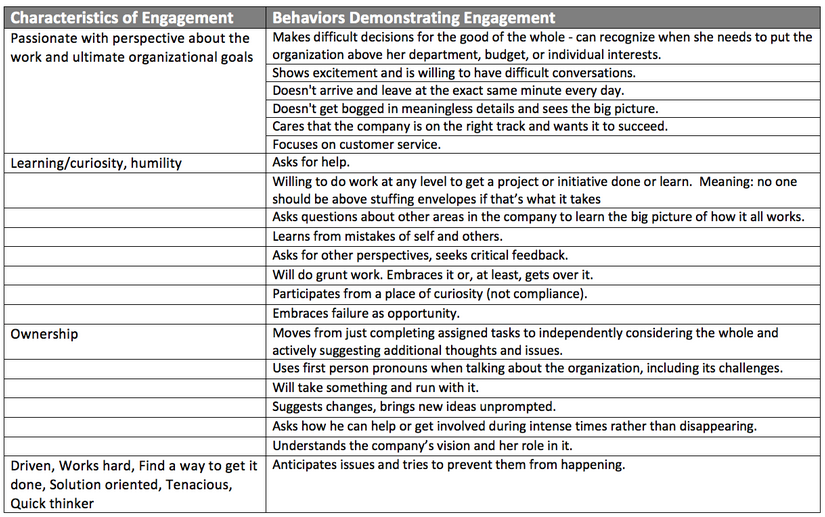

We throw terms like “employee engagement” into the middle of a conversation and watch all the heads start nodding “yeah, we need that!” But, we rarely take the time to actually define what it means specifically for us. Out of curiosity, I asked some colleagues from a variety of fields what “characteristics or behaviors” define an engaged employee for them. The response was kind of overwhelming, and many noted the question was “timely” for their work. The respondents included leaders from technology, law, financial services, real estate, sales, a barbershop chain, consumer product marketing, project management consulting, government, K12 education, higher education, fundraising, nonprofit and a few more. Here are their responses (loosely organized for easier consumption):


If you don’t really know why you are communicating, then your audience won’t really know why they should listen.

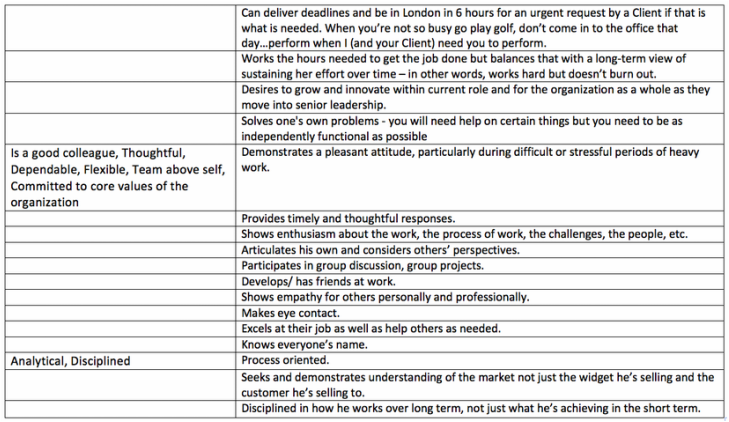

An effective communication strategy requires a mix of communication types, not only in terms of media, but also in terms of reasons why you are communicating. We typically overwhelm people with things we want them to know (whether they asked for it or not) and things we want them to do (whether they want to, need to, or not). These are largely transactional communications. And, despite their prevalence, when it comes to building a connection with your audience, “know” and “do” communications are the weakest forms (other than communicating to hear yourself communicate, of course). At some point, we need to make people feel, and feel a part of (engage in), what we are doing as an organization. After all, transactional communication will deliver transactional relationships. If we want something more, we need to communicate accordingly.

During a workshop a few months ago, Michael Burcham said: “Every time you make a policy for something that is common sense, you take a little piece of everybody’s brain.”

I chuckled at the candor (and the image), but have been digesting it ever since. It seems to me that policy, best used, establishes a safety net for the organization (or a community of people of any sort). It sort of says: if our people or our actions fall below this basic level, or beyond this broad range of acceptable behavior, then there will be consequences. If there are uncertainties for the individual member where he needs guidance from the organization, there it is. In these cases, policy is intended to be behind-the-scenes and not an explicit part of everyday interactions (except for the HR directors and the like who manage them for the organization). Policy should provide a baseline, or set of parameters, that most successful employees don’t spend much time hovering around. But, for many, particularly larger organizations, instead of providing broad parameters, policy is perceived to define the accepted level of execution. It has moved from covering the organization for the worst-case scenario to codifying expectations of daily performance. So, people at a decisive moment defer to policy rather than their common sense. And, this, according to Burcham, is where we lose a piece of our brain, and (according to me) our soul. So, we must decide if we want policy-driven organizations or people-driven organizations (or likely an effective balance of both). The former leverages the tools of the organization, the latter the tools and creativity of all of its members. The former slows and systematizes organizational function, the latter helps it remain nimble and open to new inputs. Either in the extreme exposes the organization to a different set of threats. Which brings me to another quote from Burcham that day: “Your people should grow at a faster rate than your company.” So, there is the real challenge! When you look at your company or your organization, are the people who make it up growing faster than the entity as a whole? 
Are they pushing you for new opportunities for personal growth? Can they execute without micromanagement? Do they surprise you with their problem-solving? Are they generating innovation and developing ideas to drive you forward? Or, are they waiting on direction? Acting only if policy is there to guide them? If it’s the latter, they may have experienced a policy lobotomy.

Organizational culture and communication are ubiquitous; both are inputs and outputs that persist beyond operational or organizational silos. They exist whether they are invested in or not. They actively define the nature of the system whether they are acknowledged or not. They are the DNA.

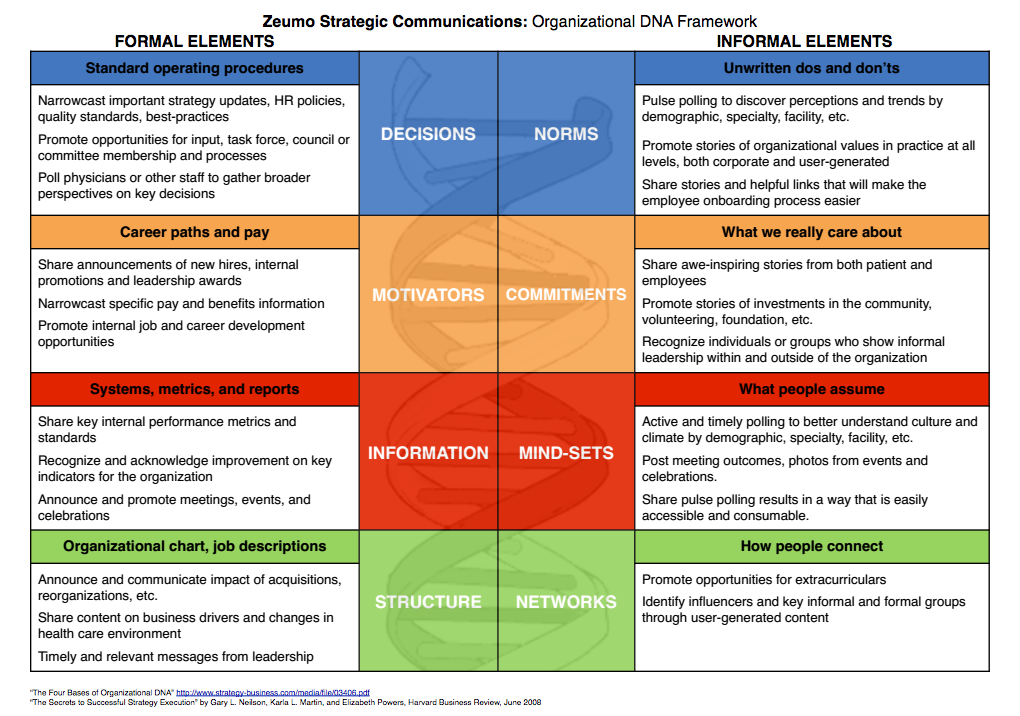

So, I have taken a stab here at showing how you might apply the Organizational DNA Framework to a more strategic internal communication plan, one that Zeumo can help you deliver, of course.

It seems like every moderate-sized or larger organization has one (at least one). It’s that department or that leader who, for the sake of covering their own backside, and ostensibly that of the organization, is far more incentivized to say “no” than “yes” (or even “maybe” for that matter).

In some places it is an entire department. It’s HR; it’s legal or compliance; it’s IT. (There are certainly more. These are just the ones I have experienced.) Everything in the design of these departments, in their incentive structure, in the skills of their people, in their intended, or de facto, role in the larger organization leads to “no.” And, technically, there’s nothing wrong with it. They keep the organization out of trouble. They keep it doing things that they know are already acceptable, safe, compliant, evidence-based, industry standards. In other places, it’s a particular leader. The psychoanalysis of this type of leadership is far too complex for this blog, but please just note the issue. In terms of innovation in your organization, the result is the same. Status quo. The chronic “no” problem for your organization is strategic, and strategy should be forward-looking. Is the rote answer “no” helping your organization get where it needs to go? Does “no” help you innovate? Does “no” keep you nimble and adaptable to inevitable changes in the field? Does “no” help you listen to your employees more openly? Does “no” help you listen to your clients? Does “no” help you try new technologies? Probably not. (Some might even say “no.”) For all of these to happen, the organization must be willing to take on risk, to expose itself to what it doesn’t know, not merely cover its backside based on what it does. My goal here is to raise a flag for organizational leaders, not to bash these departments (although maybe to highlight those all-“no”ing individuals). Leaders, do you have a “no” department? Be honest. Do you know if you have a “no” department? Be honest! Do you have a “no” man or woman? What it boils down to: Is your organization strategically aligned? Have you instilled all of your departments with the people, the power, and the shared vision to help move your organization forward? 
Do your leaders share the same vision and have the right skills, dispositions, and incentives to take you there? If you are not aligned, then the “no’s” may cover you for now, but bury you in time.

Strategic information in organizations can be pared down to two primary types: (1) what I (the employee) want or need to know, and (2) what the organization wants or needs me to know. Sometimes they are the same. Often they are not.

In fact, if they are the same, you probably have a strong culture and decent communication strategy, articulating a clear and engaging message and delivering it effectively. But, for many of us (if we are honest), that sort of alignment is still an aspiration. Often organizations are predominantly either want-to-know organizations or need-to-know organizations. Want-to-knows pride themselves on being highly participatory and democratic. They want their people involved and they believe in the knowledge of their people to drive their work. Anything “top-down” can even be taboo. Need-to-know organizations invest specifically in top-down models of information flow. Given the “right” information, their people will execute and the end result will be effective and efficient performance. The premise, although probably packaged differently, is: “we’ll just tell you what you need to know.” In the extreme (and I have seen both), neither works. Want-to-knows can be so “organic” and participatory that the direction of the organization is perpetually unclear, and roles and responsibilities (even deliverables) become uncertain. Everyone is involved but little gets done. Despite the intention of building a positive and engaging culture, frustration slowly brews as clarity and execution wane. Alternatively, need-to-knows risk alienating their people and losing the leverage of their “local” knowledge. As people adapt to narrow sources of information from the top, their confidence in themselves and those closest to them breaks down. Deference to others for decision-making sets in. The organization becomes inefficient and slow to respond to its environment. People stay really busy but don’t seem to get much done. So, if you build a communication strategy, whether for an entire organization, a division, or a department, you have to be courageous enough to let your people speak and smart enough to listen for understanding. 
You also have to be bold enough to have a clear voice and articulate a compelling direction for your people to rally behind. Your organizational information flow is constant; whether or not it is strategic or qualifies as communication is up to you.

In business, and increasingly in philanthropy and other areas, we want to know the return on investment (ROI) when we consider a new technology, a new practice, or even a new policy. And, in some cases, this is an easy measure and a direct correlation. A new piece of equipment purchased saves X amount of time, increasing productivity and/or decreasing costs by Y dollars. Wise leaders understand, however, that returns aren’t always that direct. Investing in products or services that enhance a company’s culture or improve its communication requires a broader understanding of “return” and typically requires a different time horizon and calculus for measurement. Employee retention, talent recruitment, employee affinity and resulting productivity, trust in your company, quick access to organizational knowledge, less time wasted managing emails resulting in lower employee stress, organizational goodwill: these returns are often difficult to put a dollar value on, or to correlate to a singular investment or action by the company. Yet, their benefits impact nearly every interaction within our company every day, and ultimately find their way to the bottom line. They build the workplace, not just the work. Good leaders know these kinds of returns are critical to company success. And, focusing on them may just require a different question: what is the consequence of NOT investing? What are the hidden costs when we fail to invest in culture? What are the efficiencies we will never know if we choose not to invest in better communication? What are the wasteful work-arounds we cannot even see but that are already in place as a result of our failure to invest? 
Explicit returns on investment in culture and communication are often nebulous, and the fact that they cannot be clearly defined too often means we don’t invest in them. It’s a self-reinforcing loop that cuts at the heart of our companies, challenges our leadership and our productivity, and impacts our bottom line. While writing this blog, I came across an article in the New York Times that included the following quote: “(In our research) We often ask senior leaders a simple question: If your employees feel more energized, valued, focused and purposeful, do they perform better? Not surprisingly, the answer is almost always “Yes.” Next we ask, “So how much do you invest in meeting those needs?” An uncomfortable silence typically ensues.” The article goes in depth in exploring this contradiction of acknowledged value and persistent lack of investment. Although long, it’s definitely worth a read. At the most basic level, however, I think we need to start by changing the question, or at least asking another question. Instead of only asking about the ROI, we also need to ask about the consequence of not investing (CNI).

In a variety of conversations, workshops, and planning sessions over the years, whether around technology adoption, organizational culture, or school climate, I have referenced the following change model out of Harvard:

Change = Dissatisfaction x Vision x Plan

Why is this simple model so powerful? At the most basic level: what happens when you have a zero for any of these elements? No change. It’s simple multiplication, but profound in that so many of our traditional change efforts are built on addition strategies. If we do this, and then we buy that, then it will add up to change. But, addition alone doesn’t generate real change. Change is multiplicative. The elements necessary for change are interdependent and are thus magnifiers of each other. 
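
To make the arithmetic concrete, here is a minimal Python sketch. The 0-10 rating scale, the function names, and the example scores are all assumptions made for illustration; the model itself only says that the three elements multiply.

```python
# Hypothetical 0-10 ratings for each element. The scale is an assumption
# for illustration only; it is not part of the change model itself.

def change_multiplicative(dissatisfaction, vision, plan):
    """Change = Dissatisfaction x Vision x Plan."""
    return dissatisfaction * vision * plan


def change_additive(dissatisfaction, vision, plan):
    """The 'addition strategy': treat the elements as if they simply add up."""
    return dissatisfaction + vision + plan


# A strong vision and a strong plan, but no real dissatisfaction.
scores = {"dissatisfaction": 0, "vision": 9, "plan": 9}

print(change_multiplicative(**scores))  # 0: a single zero wipes out the rest
print(change_additive(**scores))        # 18: addition hides the missing element
```

Under the additive view, the same organization looks like it is most of the way there; under the multiplicative view, it has not moved at all.
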
So, why do we struggle to pull this simple multiplication together on some of our most persistent organizational, educational, and community issues? After all, we have built countless strategic, community, and organizational plans. We have crafted mission and vision statements for our organizations, collaborations, task forces, committees, and initiatives. We’ve brought in trainers, consultants, data wonks, and various other experts from out of town. We have invested millions to develop, implement, and evaluate new models and new technologies. The list goes on and on. No real change. Applying the Harvard Change Model to largely fruitless visioning and planning efforts, we are left only to reflect on our dissatisfaction. Now, it may seem ludicrous with all of the time, money, and effort we have invested into change to wonder if we are really dissatisfied. All those meetings…all that discussion…all those plans…the surveys…the focus groups…the new technologies…surely, all of that is proof we are dissatisfied, right? But is it? In expressing our dissatisfaction (and creating our visions and plans), we too often focus on the work of others. Or, perhaps, if we do focus on the real problem, we never do the ugly part of identifying how we individually are complicit. The “problem” then is this thing that just exists, but somehow isn’t created by us through our own choices and actions. We all join the chorus saying “something’s gotta change” with an implicit “but it’s not me”. So, if a critical mass is not truly dissatisfied with our own work (and not just the work of others) then the real dissatisfaction required to generate change doesn’t exist. There is only frustration, blame, and subsequent defensiveness (and a lot of failed visions and plans). If all of us who claim dissatisfaction, whatever the issue and wherever we work, actually changed our own practices, I wonder if it might add up to something?  
Years ago, while commiserating about limited access to higher education for low-wealth students, a colleague offered a thought: “Every system is perfectly designed to deliver the outcomes it delivers.” If you think on that for just a moment…(go ahead, do it!)…it’s both painfully obvious and painfully…well…painful. But, for anyone working to change the outcomes that are important to them in education, politics, justice, or otherwise, this simple statement tells us where our efforts must be directed: the systems that we have, advertently or inadvertently, designed to underperform (or to perform exceptionally toward outcomes we never intended). Under this premise, the school system that is struggling with dropouts is perfectly designed to generate those dropouts. The justice system that incarcerates men of color at dramatically higher rates than anyone else is perfectly designed to incarcerate men of color. The political system that generates corruption, gridlock, and weak candidates is perfectly designed to do just that. System performance is not the sum of its individual elements. It is the interrelated (systemic) performance of its elements. Systems get misaligned because we build and invest (or disinvest) in them element by element often over long periods of time, and amidst shifting values and visions. And, the more we address individual elements in isolation the more likely we are to create systemic dissonance (the type of boiling-frog dissonance we actually grow to accept). Within an organizational system, for example, perhaps we have rewritten our values statement, but our organizational structure is out-of-date or even arbitrary. We revisit our investments (budget, people, etc.), but align them with our organizational structure rather than our strategy (this is my new definition of bureaucracy, by the way). We clarify and document our desired outcomes, but we maintain old strategies that have lost relevance in a changing environment. 
We improve our product or service delivery, but never invest in our human capital pipeline to support and sustain it. When we see systemic failure, we cannot blame the system without owning our role in it. We cannot claim that our part of the system is working, and it’s everyone else’s that’s broken. We cannot do fragmented and narrow work and believe it will add up to a healthy system. It won’t. If we are going to create the system that is perfectly designed to deliver the outcomes we actually want, we need to design, invest, and lead systemically.

It seems educators, reformers, and advocates everywhere are committed to the idea of “data-driven decision-making.” Presumably, this term and its popularity are outgrowths of increased visibility and accountability in public education along with the rapid growth of the role of data in other parts of our lives. And, let’s be honest: it sounds good. It makes us feel secure. It sounds really smart. And, if done well it probably could be transformative. In order for data-driven decision-making to have much meaning, however, we need to maintain a critical eye and keep asking questions (let’s at least try to keep it from being pure jargon anyway):

DATA …

What’s data and what’s not data? The brands that survey us and track our online and buying patterns never really ask if the data they see can be verified by a nationally recognized higher education institution. It doesn’t matter. It’s data, and they use it for what it is. In education, we need to get the idea of data out of the clouds (only data wonks understand it), out of institutional paradigms (data-driven and evidence-based are not synonymous), and demystify it a bit (every interaction with another human is full of data points). Data isn’t just delivered to us from the researchers or the “data people” at central office. We don’t need a published report or a study to have and use data. 
When we talk to a student and ask what she is interested in, what her concerns are, how she is feeling: that’s data. We just need to ask. If you ask 30 students at your school if they have been bullied in the last month and 15 say yes, you have data that suggests a bullying problem – University X doesn’t need to confirm it.

DATA … WITH PEOPLE …

Is the data merely accessible or is it actually consumable? In its current state, data in education feels too complex, distant, and obtuse. I am a reasonably educated guy, and when I look at some of the data and spreadsheets that actually get shared from time to time with students and parents and even with teachers more frequently, my eyes go crossed. And, when I think about indicators like school climate that might actually be helpful in real-time, there’s no good data being captured. Just because it makes sense in a database – or to someone building a database – doesn’t mean data makes sense in the hands of those who are supposed to interpret and use it. The fact that we can report on it annually as a school system or community and say “yep, we track that” or dig it out occasionally for a grant says little about its usefulness in our daily work. In fact, data that is six clicks deep in a database or learning management system should probably not even be considered available to most users. It’s not consumable anyway. Data-driven decisions start with our ability to process data in terms we understand and in the context of decisions and actions we actually control.

DATA … WITH PEOPLE … WITH DECISIONS TO MAKE

Who are the deciders and what do they get to decide? As you might guess from my previous writing and work, I wonder, in particular, where our students are in this conversation. In my experience, students generally don’t have much say on important issues in their schools (I am being gentle here). So, obviously, school data isn’t something we discuss with them. Instead, we treat students merely as data providers, not data users. 
Meanwhile, they collect and analyze data every day and in every interaction and use it to make important personal and relational decisions. But, there is a challenge here for teachers and other staff too. Most teachers I talk with view data as more of a tool of external accountability than professional process and continuous improvement. And, often the data they are accountable for reflect variables they have little-to-no control over, particularly in the short term. So basically, (1) they have access to data that doesn’t relate to their actual realm of decision-making; and/or (2) they are trying to make decisions (and someone wants the data that supports it) and they don’t have it. But, if data-driven decision-making is critical to the improvement of schools and development of communities, shouldn’t it be critical (available, consumable, and relevant to decision-making) across all stakeholders? If the range of consumable data, data usage, and the related (or unrelated) decision-making processes are narrow, unclear, or inconsistent, then we can be pretty sure our data-driven outcomes will be as well. Like anything else, the data on data-driven decision-making will likely reflect the quality of implementation, not the idea itself.
